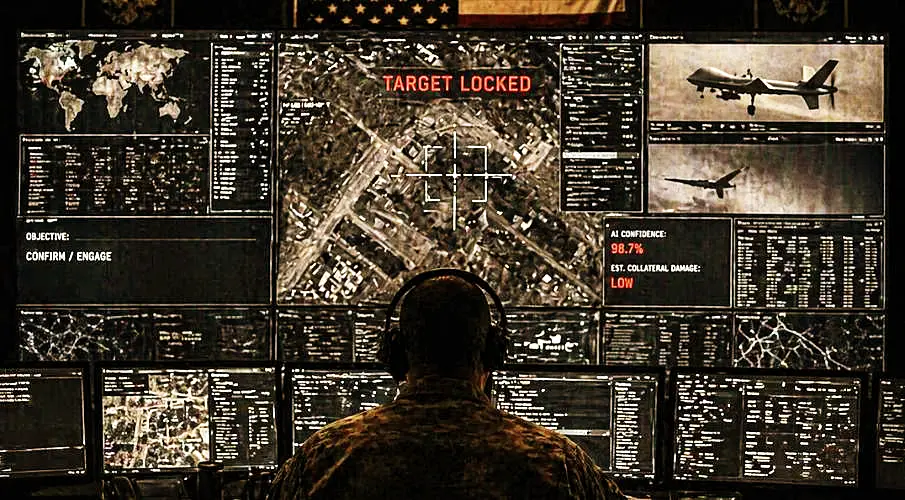

In the 1.5 trillion dollar budget request, a term appears that sounds harmless yet, according to investigative reporting, changes everything: Special Operations Autonomous Warfare Center. It is a center built for exactly what has long been in preparation: collect data, select targets, trigger strikes. The process is in place; only a human still stands in between. For years, the US military has processed enormous amounts of information: satellite imagery, communications data, movement profiles. What used to take days now happens in minutes. Programs draw connections, mark locations, set priorities. At the end, there is a point on a map. A target. What comes next decides life and death.

The units involved are not new: Navy SEALs, Green Berets, Delta Force. Since the attacks of September 11, these special operations forces have made targeted killing a central instrument: capture, eliminate, dismantle organizational structures. This logic has been built up over two decades. Now it is being accelerated.

In the budget, the item appears inconspicuously, almost hidden between columns of numbers and standard phrasing. Yet this is exactly where the real break lies. United States Special Operations Command requests additional funding to expand the striking power of its units: more presence, more projection, more access. Behind this lies not just conventional rearmament but the next step. The request explicitly names the creation of a Special Operations Forces Autonomous Warfare Center. The center does not replace existing structures; it compresses them. Intelligence, analysis, and target selection are pulled closer together, made faster, and connected more directly.

The justification follows the familiar line: growing threats, global deployments, mounting pressure on special forces. At the same time, it becomes clear where the system is shifting: no longer just supporting operations, but automating those very processes. What is striking is what happens alongside it. While new capabilities are built, funding disappears elsewhere. Programs for assessing civilian harm no longer appear in the request. The part that could evaluate and restrain is not continued. It is written in black and white in a document that hardly anyone reads: more funding for special forces, a new center for autonomous warfare, and less control over the consequences.

The new center does not change the method, only the speed. The infrastructure already exists: intelligence, targeting, strike. The only thing missing is time, and that time is now being removed. The programs take over the part that used to be reviewed. The step from suspicion to decision becomes shorter. Officially, the human remains in the process. Admiral Brad Cooper says humans will always decide what is struck and what is not. But this is exactly where the contradiction lies. When systems analyze, compare, and mark targets in seconds, every delay becomes a problem. The human becomes the bottleneck, and military processes do not tolerate a bottleneck for long.

Experience with drones shows where this leads. There, too, officials initially said that every operation would be carefully reviewed. Over time, the chain was compressed. Decisions became faster, processes more routine. In the end, often only a confirmation remained: a click that completes the process rather than questioning it.

At first glance, it is only a small table. But tucked between line items for administration, suicide prevention, and programs against sexual violence, the very item meant to record and limit civilian harm disappears. The previous year, measures to limit and respond to civilian harm still appeared with a figure of 259. After that, nothing: no expansion, no continuation, not even a residual amount. This is not a technical adjustment and not a minor cut. What is removed is precisely the area that records what military force does to civilians in the first place. Everything else continues. This part does not.

At the same time, something else shifts. With the civilian-harm programs gone from the same budget, the very part that could sound a warning is cut: the systems become faster while the corrective mechanisms shrink. The military logic behind this is clear. Opponents rely on scale: drones, simple rockets, cheap systems in large numbers. The response is automation: more targets, more speed, more reactions in less time. This has become visible in Ukraine and in the war with Iran. The challenge is no longer a single target, but many at once.

Other commands are already moving in this direction. U.S. Southern Command in Miami is automating the tracking of drug-smuggling routes, where speedboats and mini submarines are treated as targets. The technical step from tracking to engagement is small. What is striking is how little this is discussed. Congress has so far held no serious debate about such structures. The item appears in the budget, embedded in special programs and largely shielded from scrutiny. Decisions are made without the public learning what they mean.

Stanley McChrystal describes the development openly. He compares the technology to a child that can already kill at an early age and grows stronger over time. What he does not say is that strength alone provides no direction. Systems do not make moral decisions; they execute what they are given. The decisive question therefore remains simple and uncomfortable: if the machine decides faster than the human, who ultimately controls the process? And how long will the human actually remain part of that decision?

To be continued...

Updates – Kaizen News Brief

All current curated daily updates can be found in the Kaizen News Brief.
